With more than 100GW of new AI data centre capacity expected between 2026 and 2030, operators are discovering that traditional AC energy infrastructure can’t scale alongside megawatt‑class GPU racks. In AC power chains, 10-30% of all incoming energy is lost as heat before it ever reaches a GPU, an inefficiency many operators now call the conversion tax. The industry is rapidly moving toward 800–1500V DC power distribution because AI racks are outgrowing what traditional AC infrastructure can support. Dafna Granot, Meir Adest and Igor Morozov report

The rapid expansion of AI data centres is severely constrained by power generation and grid capacity. As operators prioritize maximizing usable compute per megawatt, legacy AC-powered infrastructure has become a critical bottleneck. Traditional AC-powered systems impose a ‘Conversion Tax’ – wasting an estimated 10–30% of input power through multiple conversion stages while struggling to handle the thermal and density demands of next-gen GPU racks approaching 1 MW each[1]. To overcome these constraints, the industry is accelerating its transition toward 800V direct-current (DC) architectures to minimize cabling bulk, drastically reduce conversion losses, and enable unprecedented power densities.

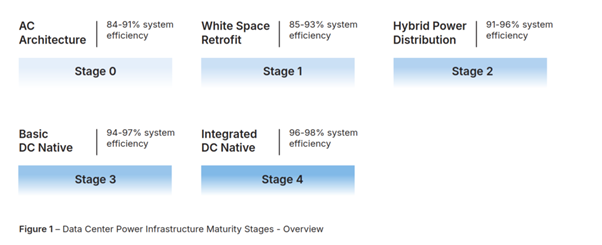

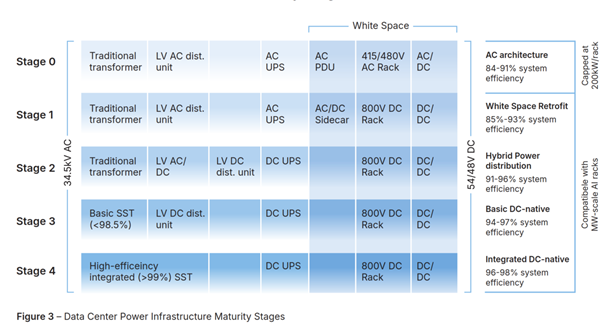

To navigate this transition, this paper introduces a five-stage maturity framework for data center power architectures, evolving from legacy AC setups (Stage 0) to an ultimate Integrated DC-native solution (Stage 4). This framework maps the industry’s potential progression through intermediate retrofit and hybrid models, which incrementally reduce AC dependencies but still carry significant system complexity and conversion penalties. Ultimately, it demonstrates that maximizing compute density requires advancing past early DC-native designs (Stage 3) to a fully streamlined Stage 4 architecture.

SolarEdge is engineering a holistic Stage 4 power platform positioned to connect medium voltage (MV) lines directly to the DC bus. At its core is an ultra-high efficiency (>99%) Solid State Transformer (SST), paired with a highly configurable native DC-UPS and an intelligent DC-power distribution layer. By eliminating redundant AC-DC-AC conversion cycles, step-down transformers, and separate low-voltage units, this integrated architecture aims to achieve a total system efficiency of up to 98%, reclaiming previously wasted power and transforming it into revenue-generating compute.

Transitioning to an 800V DC architecture requires expertise in high-voltage DC system design, real-time management, and massive operational scale. Drawing on two decades of leadership in power electronics, SolarEdge is adapting advanced distributed energy technologies to meet the rigorous safety and control demands of AI workloads. Backed by the real-time monitoring of millions of DC-coupled systems, over 140 million units deployed worldwide and an operational track record of 60GW+, SolarEdge is positioned to deliver this highly efficient, gigawatt-scale infrastructure on time.

Power delivery: the bottleneck of AI data centres

The AI boom is triggering unprecedented infrastructure investment, with more than 100GW of AI data centers expected to be built between 2026 and 2030[2]. Generation and transmission constraints limit new data center buildout, with a substantial power shortfall expected in the coming years. As a result, grid connection capacity is increasingly the gating factor for data center expansion. This is pushing operators toward approaches such as on-site generation and more flexible interconnection and contracting models. Still, the top priority remains maximising usable compute per megawatt of grid capacity.

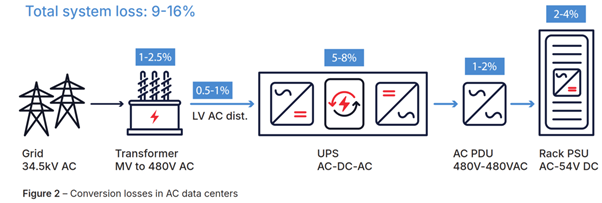

One of the most direct ways to improve compute utilisation is to reduce power conversion losses. In traditional AC power chains, electricity typically passes through 5–7 conversion stages from grid to GPU, with an estimated 10–30% of input power lost as heat from generation to end use. In today’s power-constrained environment, data center operators can no longer afford this ‘Conversion Tax’, a material penalty on both capacity (less power reaches the IT load) and cost (more cooling and electrical infrastructure).
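The way losses compound across stages in series can be sketched in a few lines. This is a minimal illustration only: the six per-stage efficiencies below are hypothetical values chosen for the example, not measurements of any real power chain, and `chain_efficiency` is a name introduced here.

```python
from math import prod


def chain_efficiency(stage_efficiencies):
    """End-to-end efficiency of conversion stages connected in series.

    Each stage passes on only a fraction of what it receives, so the
    overall efficiency is the product of the per-stage efficiencies.
    """
    return prod(stage_efficiencies)


# Hypothetical per-stage efficiencies for a six-stage AC chain.
# Illustrative assumptions only, not vendor or measured data.
ac_chain = [0.99, 0.96, 0.99, 0.975, 0.96, 0.97]

eta = chain_efficiency(ac_chain)
loss_fraction = 1.0 - eta
print(f"end-to-end efficiency: {eta:.3f}, lost as heat: {loss_fraction:.1%}")
```

Even when every individual stage looks efficient on its own, the product falls quickly as stages are added, which is why removing stages matters more than polishing any single one.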

In parallel, AI workloads are driving a sharp rise in rack power density as GPUs grow more power hungry and more tightly coupled. For example, NVIDIA’s announced Kyber racks with Rubin Ultra GPUs are expected to approach 1MW per rack, a 25x leap over previous-generation standards. This rapid densification further stresses power delivery architecture and amplifies the value of higher-efficiency, lower-loss distribution.

The data centre power infrastructure transition

Legacy AC‑powered infrastructure is hitting a thermal and physical ceiling, unable to support next‑generation AI clusters. Much like liquid cooling transitioned from a premium offering to a physical requirement for 100kW racks, direct‑current (DC) distribution is becoming a necessity for megawatt‑scale deployments. The industry is converging on 800V DC architectures to minimise cabling bulk, drastically reduce conversion losses, and enable unprecedented power densities. While data centres typically interface with Medium Voltage (MV) AC lines (10kV–34.5kV), the path to 800V DC varies. Two primary emerging rack standards are NVIDIA’s monopolar 800V DC end‑to‑end architecture and OCP’s Mt. Diablo ±400V DC specification.
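The link between distribution voltage and cabling bulk follows from basic circuit relations: for a fixed power, DC current falls in proportion to voltage, and resistive loss in a given conductor falls with the square of the current. The sketch below assumes an ideal DC feed to a 1 MW rack and compares the 54 V compute-tray bus level mentioned in the notes with 800 V and 1500 V. The function names are introduced here for illustration, and real conductor sizing also depends on factors this ignores, such as thermal limits, fault currents and applicable codes.

```python
def dc_current_amps(power_w, voltage_v):
    """Current drawn by a DC load: I = P / V."""
    return power_w / voltage_v


def relative_i2r_loss(voltage_v, reference_v):
    """Resistive loss at voltage_v relative to reference_v, assuming the same
    power and the same conductor (loss scales with I^2, and I scales with 1/V)."""
    return (reference_v / voltage_v) ** 2


RACK_POWER_W = 1_000_000  # roughly 1 MW per rack, as cited above

for volts in (54, 800, 1500):
    amps = dc_current_amps(RACK_POWER_W, volts)
    rel = relative_i2r_loss(volts, reference_v=800)
    print(f"{volts:>5} V: {amps:>9.0f} A, I^2R loss vs 800 V: {rel:.3f}x")
```

At 800 V the 1 MW rack draws 1250 A; the same power at 54 V would need more than ten times that current, which is the intuition behind carrying the higher voltage as close to the compute tray as practical.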

Strategies for the transition range from whitespace retrofits to hybrid configurations. However, DC‑native solutions are expected to ultimately prevail, offering superior efficiency, optimized whitespace utilization, and reduced CapEx.

To facilitate the discussion, we propose a framework[3] describing the evolution of data center power architecture from today’s AC infrastructure to the ultimate DC‑native solution. Each stage of this evolution streamlines the power path, reducing component count while maximising efficiency gains and grid compatibility for high‑density AI clusters[4].

Stage 0: AC infrastructure (current state):

Based on 415V/480V AC topologies with traditional UPS for short‑duration backup. Compute racks receive AC input and perform internal conversion to DC, leading to multiple efficiency bottlenecks. Capped at around 200kW per rack.

Stage 1: White Space Retrofit:

Introduces 800V DC racks while preserving existing AC infrastructure. An AC/DC ‘Sidecar’ unit in the white space handles the conversion. While this method enables high‑performance compute, it suffers from high cumulative conversion losses and consumes valuable floor space.

Stage 2: Hybrid Power Distribution:

Utilizes traditional transformers but introduces a DC UPS, connected in parallel to the 800V DC bus. During normal operation, power flows directly from source to load without passing through energy storage. This eliminates the 5–8% continuous loss of double‑conversion AC UPS systems. This stage begins to streamline the power path but retains the complexity and footprint of legacy AC components.
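To put the 5–8% figure in context, the following sketch converts a continuous fractional loss into megawatts and annual energy. The 10 MW throughput is a hypothetical value chosen for the example, the loss is treated as a fixed fraction of the power passing through the UPS, and year-round operation at constant load is assumed.

```python
HOURS_PER_YEAR = 8760


def continuous_loss_mw(throughput_mw, loss_fraction):
    """Power dissipated continuously for a given fractional loss."""
    return throughput_mw * loss_fraction


def annual_loss_mwh(throughput_mw, loss_fraction):
    """Energy lost over a year of constant operation."""
    return continuous_loss_mw(throughput_mw, loss_fraction) * HOURS_PER_YEAR


THROUGHPUT_MW = 10.0  # hypothetical power passing through the UPS

for loss in (0.05, 0.08):  # the 5-8% range quoted above
    mw = continuous_loss_mw(THROUGHPUT_MW, loss)
    mwh = annual_loss_mwh(THROUGHPUT_MW, loss)
    print(f"{loss:.0%} loss: {mw:.2f} MW continuous, {mwh:.0f} MWh per year")
```

Under these assumptions the double-conversion loss amounts to 0.5–0.8 MW of continuous heat, before counting the cooling energy needed to remove it.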

Stage 3: Basic DC‑Native:

The first truly DC‑native configuration. A Solid State Transformer (SST) connects to MV and replaces the AC/DC conversion stage. While this significantly improves efficiency and allows a parallel DC‑UPS to provide both backup power and active peak shaving, the architecture often relies on additional hardware. A step‑down transformer is frequently required to bridge higher grid voltages (typically up to 34.5 kV) to the SST’s working voltage, and separate low‑voltage (LV) distribution units are sometimes necessary. In this configuration, SST efficiency typically peaks at 98.5%[5].

Stage 4: Integrated DC‑Native:

A high‑efficiency SST (>99%) connects directly to 34.5 kV AC and integrates DC distribution and native DC‑UPS capabilities into a single platform. By eliminating the need for intermediate step‑down transformers and separate LV distribution units, this fully integrated system provides seamless, granular power control and ultra‑fast response to both load demands and grid fluctuations.

SolarEdge data centre power solution

Building on 20 years of DC power electronics expertise, including six years of dedicated MV SST development, SolarEdge is engineering its core scientific breakthroughs into a holistic power platform for AI data centers. The design of the solution is intended to include the following key components:

- Ultra‑High Efficiency SST (>99%): the Solid State Transformer will aim to interface directly with up to 34.5 kV MV lines, delivering stabilized 800 V–1500 V DC to the racks, minimizing conversion losses to unlock more revenue‑generating compute.

- Native DC‑UPS: will aim to connect to the DC bus, eliminating AC‑DC‑AC conversions for maximal efficiency with seamless backup and peak shaving. Highly flexible, it is designed to allow independent, per‑deployment configuration of battery chemistry, capacity, and physical placement.

- Integrated Intelligent Distribution: this smart DC‑power layer will aim to actively manage power delivery by combining per‑channel monitoring with localised control. It will aim to dynamically adapt to varying loads while ensuring enhanced safety, including rapid fault isolation.

According to the maturity framework, the SolarEdge solution is intended to represent a Stage 4 architecture. By combining high‑efficiency SST technology with integrated DC distribution and a native DC‑UPS, the system aims to achieve state‑of‑the‑art total efficiency while meeting the extreme response‑time requirements of modern AI workloads.

The following section examines the SolarEdge Solid State Transformer, the cornerstone of this platform. Subsequent documentation will explore other system components as well as critical design considerations for the next generation of power‑dense data centres.

The SolarEdge SST is intended to address the extreme needs of AI workloads, including:

- Operating efficiency exceeding 99%: with efficiency well above current industry benchmarks, the SST aims to minimise conversion losses and subsequent cooling requirements. By reclaiming power that was previously lost to conversion and cooling, operators will be able to deploy additional compute within the same utility or on‑site generation envelope – transforming efficiency into a direct revenue driver.

- Comprehensive Grid Support: providing ultra‑fast response to grid transients. The SST will be designed to perform active harmonic filtering, ensuring superior power quality and grid compliance without the need for external hardware.

- Modular, scalable design: delivered in 2–5MW modular units with cell‑level redundancy, the design eliminates single points of failure at both the component and system levels to ensure maximum uptime.

- Flexible and future proof: the SST platform is designed to support Medium Voltage (MV) inputs up to 34.5kV and pre‑configured DC outputs from 800V to 1500V (and could also support OCP’s ±400 V), in line with current designs and future high‑voltage industry roadmaps.
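The claim that efficiency translates into additional compute within a fixed power envelope can be made concrete with a rough calculation. The sketch below assumes a hypothetical 100 MW grid connection, takes legacy end-to-end efficiencies of 70–90% from the 10–30% conversion-loss range quoted earlier, and uses the up-to-98% system efficiency targeted for the integrated architecture. It also assumes both figures cover a comparable grid-to-IT-load scope, which may not hold exactly; the function names are introduced here.

```python
def usable_it_power_mw(grid_mw, efficiency):
    """Power that reaches the IT load for a given end-to-end efficiency."""
    return grid_mw * efficiency


def reclaimed_mw(grid_mw, legacy_efficiency, target_efficiency):
    """Extra IT power available on the same grid connection after an upgrade."""
    return (usable_it_power_mw(grid_mw, target_efficiency)
            - usable_it_power_mw(grid_mw, legacy_efficiency))


GRID_MW = 100.0           # hypothetical grid connection capacity
TARGET_EFFICIENCY = 0.98  # "up to 98%" target stated for the integrated design

for legacy in (0.70, 0.90):  # implied by the 10-30% conversion-loss range
    extra = reclaimed_mw(GRID_MW, legacy, TARGET_EFFICIENCY)
    print(f"legacy {legacy:.0%} -> target {TARGET_EFFICIENCY:.0%}: "
          f"{extra:.1f} MW more for compute")
```

On these assumptions, the same 100 MW connection would deliver between 8 MW and 28 MW more to the IT load, without any change to the utility contract.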

The DC expertise AI data centres need

The transition to 800V DC architecture in AI data centres requires unique expertise in DC power system design and management. With 20 years of leadership in DC power electronics, SolarEdge is positioned to seamlessly bridge this gap. Technologies perfected for distributed energy systems – such as granular power tracking, advanced string management, and rapid arc‑fault detection – translate naturally into the rigorous rack‑level power control and safety protocols demanded by high‑density AI workloads.

Beyond advanced engineering, securing AI power infrastructure requires deep system visibility and massive operational capacity. Drawing on experience from the real‑time monitoring of millions of DC‑coupled systems across more than 140 million units worldwide, the SST platform is engineered specifically to meet the stringent uptime targets of modern data centers. As these facilities reach gigawatt scale, proven manufacturing execution becomes critical. Leveraging a resilient supply chain and a track record of 60 GW+ in globally deployed power electronics, SolarEdge is positioned to deliver this 800V DC infrastructure at scale and on time.

Dafna Granot, Meir Adest and Igor Morozov are with SolarEdge.

[1] Nvidia, Energy for tokens: thermal and power innovations, 20

[2] McKinsey Data Center Demand Model, 2024

[3] Inspired by the framework presented in Nvidia’s paper: “800 VDC Architecture for Next-Generation AI Infrastructure”, 2025, with additional refinement of the DC-native solution.

[4] All system efficiencies measured from 34.5kV MV grid to 54V DC compute tray bus. VRMs and cooling overhead excluded as they are consistent across architectures.

[5] According to commercially announced SST products from leading vendors, as of Q1 2026

Engineer News Network The ultimate online news and information resource for today’s engineer
